Cloud-free vs. Cloudless: Why the Line is Blurrier Than You Think

2025-06-01 · 9 min read · Sentinel-2 · Data Fusion · Mosaics · Cloud Masking

TL;DR: “Cloud-free” is meant to be date-anchored, while “cloudless” is a composite built from a time window. Modern fusion can deliver more complete scenes without losing track of when each pixel was actually observed.

Why cloud-free vs. cloudless matters

People often use “cloud-free” and “cloudless” as if both simply meant “nice-looking satellite imagery.” In practice, they describe different promises about time and provenance.

The core question is simple: Do you want pixels tied to a specific acquisition date, or are you comfortable pooling observations from a window to maximize coverage?

That distinction shows up immediately in real work. If your analysis cares about timing (compliance checks, change detection, event mapping), a mixed-date pixel can look perfectly plausible and still be wrong for the question you are asking. If your goal is a clean backdrop for human interpretation, digitizing, or communication, temporal purity is often a secondary concern.

Sentinel-2 is a common baseline for this discussion because it is widely used for land monitoring and frequently composited into basemaps and mosaics. See Copernicus Data Space: Sentinel-2 mission overview.

Cloud-free vs. cloudless: Definitions that hold up in practice

A useful way to define the terms is by what they promise about provenance.

A cloud-free product is intended to be date-anchored: It is presented as representing a specific acquisition (or a very tight same-day set). In a strict interpretation, a cloud-free scene either (a) leaves gaps where clouds were masked, or (b) marks any reconstructed areas clearly so a user can tell observed pixels from non-observed ones.

A cloudless product is a composite: It is built from multiple acquisitions over a window, and it is optimized for coverage and visual continuity. The composite can be honest and high quality, but it is not trying to answer “What did this place look like on Tuesday?” It is trying to answer “What is a representative, clear view of this place during this period?”

Once you frame it this way, the “blurry line” becomes obvious: Many products that call themselves cloud-free quietly behave like small-window composites, and many “cloudless” mosaics can be configured with narrow windows that behave almost like date-anchored imagery. The label alone is not enough. The metadata is.

What “cloud-free” implies under the hood

Making a date-anchored image usable in cloudy regions forces a set of hard choices, and the choices create the artifacts people argue about.

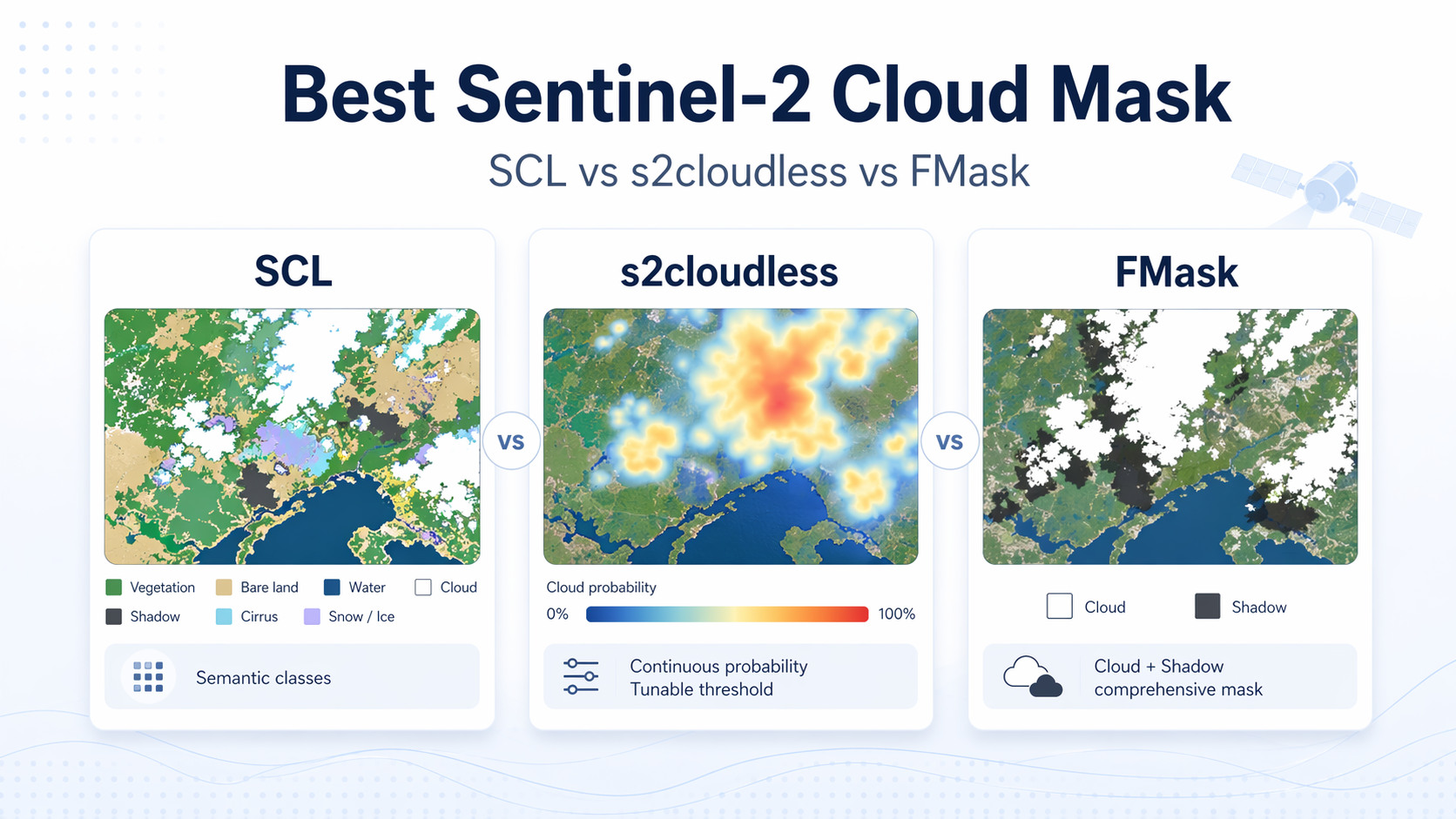

The first step is identifying contamination. Cloud and cloud-shadow detection is not a perfect yes-or-no problem. Thin cirrus, haze, bright roofs, snow, sunglint, and cloud edges all create ambiguous pixels where “mask or keep” depends on probability and thresholds. That is why mature workflows lean on probabilistic classification, context, and conservative buffers rather than pretending every pixel can be cleanly labeled. See Zhu & Woodcock (2012): Object-based cloud and cloud shadow detection (Fmask).
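The threshold-plus-buffer idea can be sketched in a few lines. This is a minimal illustration and not Fmask: the function name `buffered_cloud_mask`, the 0.4 threshold, and the one-pixel buffer are assumptions chosen for the example, and it uses plain Python lists where a real pipeline would likely use an array library.

```python
def buffered_cloud_mask(cloud_prob, threshold=0.4, buffer_px=1):
    """Return a 2D boolean grid: True where a pixel should be masked.

    cloud_prob: 2D list of per-pixel cloud probabilities in [0, 1].
    threshold:  hypothetical cut-off; a lower value is more conservative.
    buffer_px:  how many pixels to grow the mask around each detection.
    """
    rows, cols = len(cloud_prob), len(cloud_prob[0])
    mask = [[False] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if cloud_prob[r][c] < threshold:
                continue
            # Grow the detection by a square neighbourhood so that
            # ambiguous cloud edges are masked along with the core.
            for rr in range(max(0, r - buffer_px), min(rows, r + buffer_px + 1)):
                for cc in range(max(0, c - buffer_px), min(cols, c + buffer_px + 1)):
                    mask[rr][cc] = True
    return mask


# Illustrative probabilities: one confident cloud pixel with a soft edge.
probs = [
    [0.05, 0.10, 0.10, 0.05],
    [0.10, 0.90, 0.35, 0.05],
    [0.05, 0.30, 0.10, 0.05],
    [0.05, 0.05, 0.05, 0.05],
]
mask = buffered_cloud_mask(probs, threshold=0.4, buffer_px=1)
```

In this toy grid the 0.35 and 0.30 edge pixels fall below the threshold on their own, but the buffer still masks them. That is the conservative behavior described above, bought at the cost of discarding some clear pixels.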

The second step is deciding what to do with masked pixels. There are a few common outcomes:

If you only mask, you preserve date fidelity but lose spatial completeness. This is often the right answer for analytics, as long as you can handle gaps.

If you reconstruct, you gain completeness, but you must be explicit about what “reconstructed” means. Some systems fill by copying pixels from other dates (which is compositing, even if the window is small). Others estimate what the surface likely looked like on the nominal date using additional evidence (for example, nearby observations in time or across sensors). These approaches can both produce clean-looking imagery, but they imply different risks and require different provenance to stay honest.

A serious cloud-free delivery is not just an RGB (red, green, blue) image. It is the image plus evidence about what was observed, what was masked, and what was reconstructed.
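One way to picture “image plus evidence” is a per-pixel status layer delivered alongside the values. The sketch below is an assumption-laden illustration: `PixelStatus`, `build_delivery`, and the three status codes are hypothetical names, not a published product schema.

```python
from enum import IntEnum


class PixelStatus(IntEnum):
    OBSERVED = 0        # seen on the nominal date
    MASKED = 1          # flagged as cloud or shadow, left as a gap
    RECONSTRUCTED = 2   # estimated, not directly observed


def build_delivery(values, cloud_mask, reconstructed=None):
    """Return (values, status) grids with the same shape as the input.

    values:        2D list of pixel values from the nominal acquisition.
    cloud_mask:    2D list of booleans, True where the pixel was masked.
    reconstructed: optional 2D list of estimates (None where unavailable).
    """
    out_values, out_status = [], []
    for r, row in enumerate(values):
        value_row, status_row = [], []
        for c, observed in enumerate(row):
            estimate = reconstructed[r][c] if reconstructed is not None else None
            if not cloud_mask[r][c]:
                value_row.append(observed)
                status_row.append(PixelStatus.OBSERVED)
            elif estimate is not None:
                value_row.append(estimate)
                status_row.append(PixelStatus.RECONSTRUCTED)
            else:
                value_row.append(None)  # keep the gap visible
                status_row.append(PixelStatus.MASKED)
        out_values.append(value_row)
        out_status.append(status_row)
    return out_values, out_status


values = [[0.21, 0.80], [0.22, 0.85]]
cloud_mask = [[False, True], [False, True]]
estimates = [[None, 0.24], [None, None]]

mask_only = build_delivery(values, cloud_mask)            # date-pure, with gaps
filled = build_delivery(values, cloud_mask, estimates)    # more complete, flagged
```

The same function covers both outcomes: without estimates it behaves as mask-only, and with estimates every filled pixel carries a flag that downstream users can filter on.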

What “cloudless” means in practice

Cloudless mosaics are built by choosing, for every location, a “best” candidate pixel from a window. The definition of best depends on the goal.

Some pipelines use simple rules like “lowest cloud score” or “highest NDVI (normalized difference vegetation index).” Others use more robust statistics designed to reduce outliers while still choosing pixels that come from real acquisitions, not averaged blends. A well-known example is medoid compositing, where the selected pixel is the most representative sample in a multi-band sense, which helps avoid weird color shifts and outlier contamination. See Flood (2013): Medoid compositing for seasonal satellite composites.
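For a single pixel location, medoid selection can be sketched as picking the observation whose summed distance to all other observations in the window is smallest. This is a simplified illustration of the general idea rather than the exact procedure in the cited paper; the function names, the three-band reflectance values, and the dates are made up for the example.

```python
import math


def medoid_index(samples):
    """Index of the sample with the smallest summed distance to all others."""
    best_index, best_cost = None, math.inf
    for i, candidate in enumerate(samples):
        cost = sum(math.dist(candidate, other) for other in samples)
        if cost < best_cost:
            best_index, best_cost = i, cost
    return best_index


def medoid_composite_pixel(observations):
    """Pick one real observation for a pixel and keep its date.

    observations: list of (iso_date, band_values), cloudy dates already removed.
    Returns the chosen (iso_date, band_values) pair, or None if the list is empty.
    """
    if not observations:
        return None
    i = medoid_index([bands for _, bands in observations])
    return observations[i]


# Hypothetical reflectances for one pixel over a 15-day window.
window = [
    ("2025-07-02", (0.10, 0.12, 0.30)),
    ("2025-07-07", (0.11, 0.13, 0.32)),
    ("2025-07-12", (0.45, 0.47, 0.50)),  # residual haze that escaped the mask
    ("2025-07-17", (0.09, 0.12, 0.31)),
]
chosen_date, chosen_bands = medoid_composite_pixel(window)
```

Because the result is one of the input observations rather than an average, the band values stay physically consistent with each other, and the acquisition date of the chosen sample can be written into a per-pixel date layer.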

The reason cloudless products are popular is obvious: They look good and they cover large areas reliably. The cost is temporal mixing. If the window spans meaningful change (crop development, flood recession, harvesting, construction, phenology), the mosaic can become a visually smooth story that never happened on any single day.

This is not a flaw. It is a trade-off. The problem starts when the trade-off is hidden.

How to choose without fooling yourself

If your KPI (key performance indicator) is about timing, start by assuming you need date-anchored imagery, then relax only if you can prove the relaxation does not change your decision.

A good mental test is: If a regulator, a customer, or your future self asked “Which date is this pixel from?”, would you be able to answer without hand-waving?

For time-sensitive analytics, prioritize cloud-free products that expose provenance clearly, even if that means accepting gaps. For mapping, baselayers, and human interpretation, cloudless mosaics often win because completeness and aesthetics matter more than strict time attribution.

The middle ground is increasingly common: Keep a nominal date for the scene, use same-day information as much as possible, and use additional evidence only when needed, while labeling those pixels so downstream users do not mistake them for pure same-day observations.

What modern fusion changes

Fusion based on AI (artificial intelligence) changes the practical boundary between the two worlds, mostly by giving you more ways to be honest.

First, fusion can reduce the reliance on binary cloud masks. Instead of hard “keep or drop,” you can assign weights to pixels based on cloud probability, haze level, view geometry, or consistency with neighboring observations. That matters because the most damaging errors are not obvious bright clouds. They are the borderline cases: thin cloud, shadow fringes, and haze that still contains usable signal if handled carefully.
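A toy version of soft weighting, as opposed to a hard mask, might look like the following. The weighting formula, the function names, and the input values are assumptions for illustration only; they do not describe any particular fusion model, and a learned system would be far more involved.

```python
def soft_weight(cloud_prob, haze=0.0):
    """Down-weight an observation smoothly instead of dropping it outright."""
    return max(0.0, 1.0 - cloud_prob) * max(0.0, 1.0 - haze)


def weighted_estimate(observations):
    """Combine observations of one pixel using soft weights.

    observations: list of (value, cloud_prob, haze) tuples.
    Returns (estimate, total_weight); total_weight is a crude support score.
    """
    weights = [soft_weight(prob, haze) for _, prob, haze in observations]
    total = sum(weights)
    if total == 0:
        return None, 0.0  # nothing usable: leave the gap visible
    estimate = sum(w * obs[0] for w, obs in zip(weights, observations)) / total
    return estimate, total


# Two mostly clear looks and one that is almost certainly cloud.
pixel = [
    (0.30, 0.05, 0.0),
    (0.32, 0.10, 0.1),
    (0.60, 0.95, 0.0),
]
estimate, support = weighted_estimate(pixel)
```

Note that a weighted combination is an estimate, not an observation, so a pixel produced this way belongs under a reconstruction flag rather than under a single acquisition date.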

Second, multi-sensor fusion can increase same-day coverage. When you harmonize observations across sensors, you can sometimes recover a more complete scene without reaching across many days. NASA’s Harmonized Landsat and Sentinel-2 (HLS) is a public example of cross-sensor harmonization and quality layers meant to support time series work. See NASA/LP DAAC: HLS v2 user guide (harmonization and QA bands).

Third, better provenance makes composites and reconstructions safer. If every pixel carries traceable context (such as an acquisition date for observed pixels, plus reconstruction flags and confidence where applicable), a “clean” image stops being a black box. You can filter, audit, and explain instead of hoping.

The result is not that cloud-free and cloudless become identical. The result is that you can get higher completeness while staying honest about time, which is what most downstream decisions actually need.

Validation and provenance you should demand

If you only remember one thing, remember this: The interpretability of cloud handling comes from metadata, not from how clean the image looks.

At minimum, ask for these QA (quality assurance) layers or equivalent fields:

- Per-pixel acquisition date or timestamp so you can measure temporal mixing.

- A reconstruction flag so you can separate observed from inferred pixels.

- A cloud or haze probability score rather than only a hard mask.

- A source indicator (sensor or collection) so cross-sensor blending is auditable.

When you have these, you can validate products in ways that map to real risk: How often did the pipeline need extra evidence, how far from the nominal date did it reach for support, where, and with what confidence? You can also build safe defaults, for example, excluding pixels older than a threshold for certain indicators while still using the full product for visualization.
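With a per-pixel date layer and a reconstruction flag in hand, these checks reduce to a few lines. The sketch below assumes both layers are available as small 2D grids; the function names, the report fields, and the two-day threshold are hypothetical choices for the example.

```python
from datetime import date


def temporal_mixing_report(pixel_dates, reconstructed, nominal):
    """Summarize how far a product strays from its nominal date."""
    offsets = [
        abs((d - nominal).days)
        for row in pixel_dates
        for d in row
        if d is not None  # None marks pixels without an observation date
    ]
    flags = [flag for row in reconstructed for flag in row]
    return {
        "max_offset_days": max(offsets, default=0),
        "share_off_nominal": sum(o > 0 for o in offsets) / len(offsets) if offsets else 0.0,
        "share_reconstructed": sum(flags) / len(flags) if flags else 0.0,
    }


def analysis_mask(pixel_dates, reconstructed, nominal, max_offset_days):
    """True where a pixel is observed and close enough to the nominal date."""
    return [
        [
            d is not None and not flag and abs((d - nominal).days) <= max_offset_days
            for d, flag in zip(date_row, flag_row)
        ]
        for date_row, flag_row in zip(pixel_dates, reconstructed)
    ]


nominal = date(2025, 7, 10)
pixel_dates = [
    [date(2025, 7, 10), date(2025, 7, 10)],
    [date(2025, 7, 5), None],
]
reconstructed = [[False, False], [False, True]]

report = temporal_mixing_report(pixel_dates, reconstructed, nominal)
keep = analysis_mask(pixel_dates, reconstructed, nominal, max_offset_days=2)
```

The report answers the audit questions for the whole scene, while the mask implements the safe default: timing-sensitive indicators use only pixels where `keep` is true, and the full image remains available for visualization.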

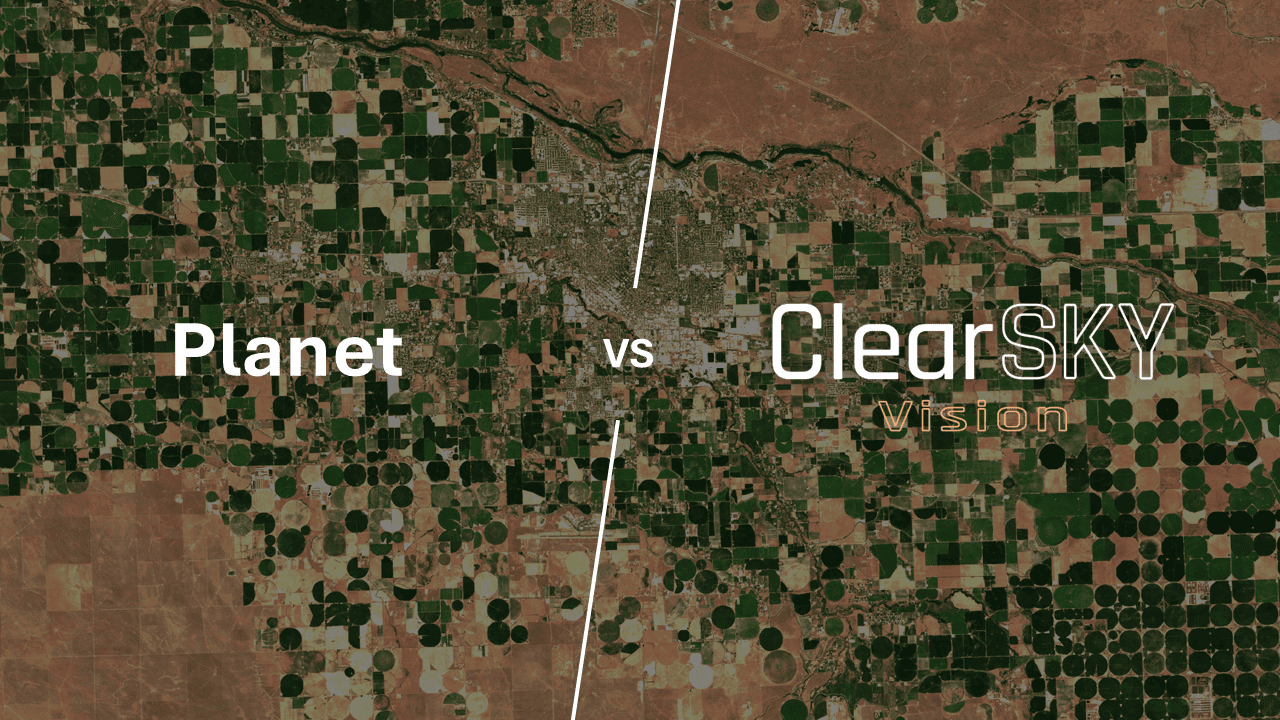

Where ClearSKY fits

ClearSKY aims to sit in the uncomfortable middle on purpose: We want the output to be cloud-free for the user, but we do not want to quietly turn a date-anchored request into a mosaic.

In practice, that means we do not deliver clouds to customers, and we do not assemble scenes by copying pixels from older dates to “patch” the nominal date. Instead, when we need extra evidence, we use historical observations as context to reconstruct what the surface likely looked like on the nominal date. We do not use future observations.

Because this can blur the labels, the practical rule is: If you need strict date purity, treat “cloud-free” as a provenance question, not a visual one. The safest version of cloud-free is the one that lets you audit what was observed, what was reconstructed, and how confident the system is in the result.

FAQ

Is a cloudless mosaic always better than a cloudy single-date image?

No. If you care about when something happened, a mosaic can mislead even when it looks perfect because different places may come from different dates. For basemaps and visual inspection, mosaics are often the better choice because completeness and continuity matter more than temporal purity. The right answer depends on whether your decision depends on timing or on appearance.

Can a product be called cloud-free if it uses pixels from other days?

It can be, but only if it is explicit about it. The moment a pipeline pulls from other dates, it needs to expose that provenance, otherwise users will assume every pixel represents the nominal acquisition. If you see “cloud-free” without per-pixel date or reconstruction flags, treat it as an unverified claim rather than a guaranteed property.

What is the simplest metadata check to separate the two concepts?

Look for a per-pixel acquisition date map or an equivalent provenance field. If every pixel can be tied back to a specific observation time, you can measure temporal mixing and decide whether it is acceptable. If the product only provides a window label like “July 2025 composite” with no per-pixel dates, it is cloudless in the sense that matters for analysis.

How wide should a cloudless mosaic time window be?

Wider windows usually improve coverage but increase temporal mixing, especially in landscapes that change quickly. Narrow windows preserve realism but may leave gaps in persistently cloudy regions. Methods like medoid selection are designed to produce more representative pixels, but the window size still determines how much change you can accidentally blend. See Flood (2013): Medoid compositing for seasonal satellite composites.